Over the past couple of weeks, I’ve been asked how to capture and track essential performance parameters, generally referred to as “technical measures,” in a model-based environment. This post explains what technical measures are and how to identify them in the operational and system architecture.

In my research on this topic, I have found two general approaches to key performance parameter (KPP) identification and management. The first is documented in the INCOSE Technical Measurement Guide (Garry Roedler and Cheryl Jones, Dec 2005, INCOSE-TP-2002-020-01). The INCOSE publication was authored in 2005 and is based on a set of Department of Defense definitions in use at the time. Since then, the Department of Defense's management of technical measures has evolved, introducing new terms and definitions. The second approach reflects this latest thinking, which can be found in the Defense Acquisition University (DAU) writings accessible through the DAU Acquisition Notes Website. Starting with the first approach, the INCOSE Technical Measurement Guide provides several definitions for technical measures, as follows:

Measure of Effectiveness (MOE) – the “operational” measures of success that are closely related to the achievement of the mission or operational objective being evaluated in the intended operational environment under a specified set of conditions; i.e., how well the solution achieves the intended purpose

Key Performance Parameter (KPP) – a critical subset of performance parameters representing those capabilities and characteristics so significant that failure to meet the threshold values of performance can be cause for the concept or system to be reevaluated or the project to be reassessed or terminated

Measure of Performance (MOP) – the measures that characterize physical or functional attributes relating to the system operation, measured or estimated under specified testing and/or operational environment conditions

Technical Performance Measure (TPM) – TPMs measure attributes of a system element to determine how well a system or a specific system element is satisfying or expected to satisfy a technical requirement or goal.

Researching this topic today through the DAU website, you will find references to Critical Operational Issues (COIs). A COI is an operational effectiveness or suitability issue that must be examined in operational test and evaluation to determine a system’s capability to perform its mission. Notice two things about this definition: first, it is focused on operational effectiveness, and second, the verification occurs during operational test and evaluation. The emphasis is on an operational context and on satisfactorily completing a mission.

COIs provide us a connection to a mission area; in other words, for a particular mission, the operational architecture should relate to a COI.

The DAU website provides definitions of additional measures as follows:

Measure of Effectiveness (MOE) – MOEs are measures designed to correspond to accomplishment of mission objectives and achievement of desired results. MOEs may be further decomposed into Measures of Performance (MOP).

Measure of Suitability (MOS) – a measure of an item’s ability to be supported in its intended operational environment.

Measure of Performance (MOP) – a measure of a system’s performance expressed as speed, payload, range, time-on-station, frequency, or other distinctly quantifiable performance measures (such as measures related to particular support characteristics including reliability, maintainability, supportability).

TPMs, in turn, refine the defined MOPs to further identify individual system element performance.

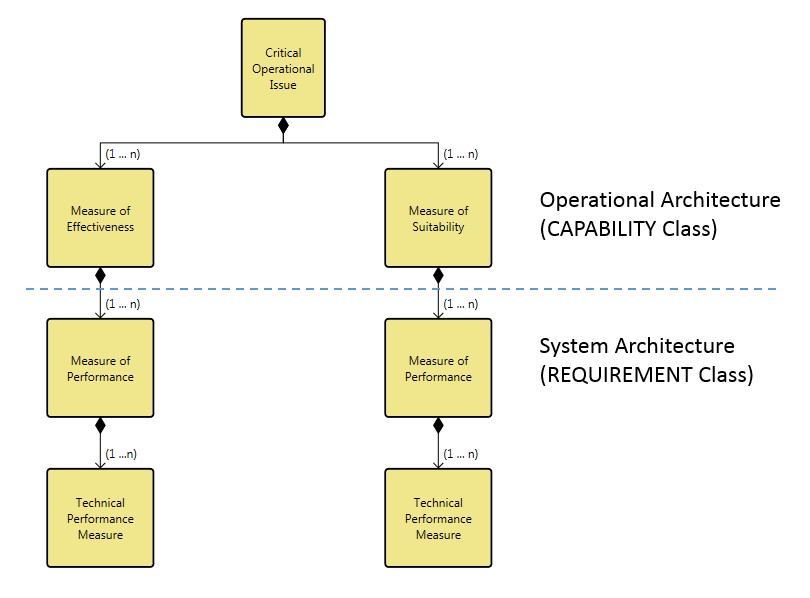

So, following the logic of the latest definitions from DAU, a mission area can be related to a COI, and a COI is broken down (or further refined) by one or more MOEs or MOSs. Each MOE or MOS is, in turn, refined by one or more MOPs at the system architecture level. Finally, a MOP can be further refined by TPMs on individual elements below the system architecture level.

Furthermore, if you look at each definition carefully, COIs, MOEs, and MOSs refer to the operational architecture level, while MOPs and TPMs apply to the system architecture level (i.e., the system or systems that implement the operational architecture).
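The refinement chain described above (COI, then MOE or MOS, then MOP, then TPM) can be sketched as a small tree with a rule for which kind of measure may refine which. This is only an illustration in plain Python, not the CORE or GENESYS schema or API; the class name `Measure`, the helper `outline`, and the example measure names are all hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass, field

# Architecture level of each kind of measure, following the DAU-based reading above.
LEVEL_OF = {
    "COI": "operational architecture",
    "MOE": "operational architecture",
    "MOS": "operational architecture",
    "MOP": "system architecture",
    "TPM": "system architecture",
}

# Which kinds of measure may refine a given kind.
ALLOWED_CHILDREN = {
    "COI": {"MOE", "MOS"},
    "MOE": {"MOP"},
    "MOS": {"MOP"},
    "MOP": {"TPM"},
    "TPM": set(),
}


@dataclass
class Measure:
    kind: str  # one of the keys in LEVEL_OF
    name: str
    refined_by: list[Measure] = field(default_factory=list)

    def refine(self, child: Measure) -> Measure:
        """Attach a child measure, rejecting links that skip a level."""
        if child.kind not in ALLOWED_CHILDREN[self.kind]:
            raise ValueError(f"a {self.kind} cannot be refined by a {child.kind}")
        self.refined_by.append(child)
        return child


def outline(measure: Measure, depth: int = 0) -> list[str]:
    """Return the hierarchy as indented text lines, one per measure."""
    lines = [f"{'  ' * depth}{measure.kind}: {measure.name} [{LEVEL_OF[measure.kind]}]"]
    for child in measure.refined_by:
        lines.extend(outline(child, depth + 1))
    return lines


# Hypothetical example measures, invented purely for illustration.
coi = Measure("COI", "Can the system detect threats in time to respond?")
moe = coi.refine(Measure("MOE", "Probability of timely detection"))
mop = moe.refine(Measure("MOP", "Sensor detection range"))
mop.refine(Measure("TPM", "Receiver sensitivity"))

print("\n".join(outline(coi)))
```

Enforcing the allowed parent-child pairs in `refine` is one way to keep operational-level and system-level measures from being linked directly, for example a TPM hung straight off a COI.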

The hierarchy of the technical measures can be visualized as follows:

Defining the technical measures is straightforward in the CORE or GENESYS modeling environment. Because some of the measures apply to the operational architecture and others apply to the system architecture, you need to use the tool’s Operational Architecture schema.

Both the CAPABILITY and REQUIREMENT classes have two attributes that allow us to identify technical performance measures. One attribute is named “Key Performance Parameter,” and right below it is the attribute named “Performance Parameter Type.” The Key Performance Parameter attribute is a Boolean data type, which means it is either True or False (the default value is False). The “Performance Parameter Type” attribute is an enumeration with a pre-set list of choices.

An absolute definition of performance parameters does not exist, and how you use this concept may vary from organization to organization or project to project. So, one of the first things you must do for your project is to confirm the selection and definition of technical measures with your customer. Then you must modify the schema to tailor the list of choices for “Performance Parameter Type” on both the CAPABILITY and REQUIREMENT classes.

For each entity in the CAPABILITY or REQUIREMENT class that you want to treat as a technical performance measure, set the Key Performance Parameter attribute to ‘True.’ Then select the Performance Parameter Type that applies to that entity.

Once you have identified your technical performance measures, you can filter either the CAPABILITY or REQUIREMENT class based on either of these attributes and create either a custom hierarchy or table export to provide traceability or review of the entities.
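To make the flag-then-filter workflow concrete, here is a minimal stand-alone sketch in Python. It does not use the CORE or GENESYS API; it only mimics the two attributes described above with a Boolean and an enumeration. The `Entity` class, the choices in `ParameterType`, the helper functions, and the sample entities are all assumptions made for illustration, and your tailored list of types may differ.

```python
import csv
import io
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional


class ParameterType(Enum):
    # Assumed tailored choices; agree on the real list with your customer.
    MOE = "MOE"
    MOS = "MOS"
    MOP = "MOP"
    TPM = "TPM"


@dataclass
class Entity:
    entity_class: str  # "Capability" or "Requirement"
    name: str
    key_performance_parameter: bool = False  # defaults to False, as in the tool
    performance_parameter_type: Optional[ParameterType] = None


def filter_measures(
    entities: List[Entity],
    entity_class: str,
    parameter_type: Optional[ParameterType] = None,
) -> List[Entity]:
    """Keep flagged entities of one class, optionally narrowed to one type."""
    return [
        e
        for e in entities
        if e.entity_class == entity_class
        and e.key_performance_parameter
        and (parameter_type is None or e.performance_parameter_type is parameter_type)
    ]


def to_csv(entities: List[Entity]) -> str:
    """Render the selected entities as CSV text for review in a spreadsheet."""
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow(["Class", "Name", "Performance Parameter Type"])
    for e in entities:
        type_name = e.performance_parameter_type.value if e.performance_parameter_type else ""
        writer.writerow([e.entity_class, e.name, type_name])
    return buffer.getvalue()


# Hypothetical model content, invented for illustration.
model = [
    Entity("Capability", "Detect threats", True, ParameterType.MOE),
    Entity("Requirement", "Detection range", True, ParameterType.MOP),
    Entity("Requirement", "Receiver sensitivity", True, ParameterType.TPM),
    Entity("Requirement", "Paint color"),  # not flagged, so it is filtered out
]

print(to_csv(filter_measures(model, "Requirement")))
```

Filtering on the Boolean first and the type second mirrors the two attributes in the tool: the flag answers "is this a tracked measure at all?" and the type answers "which kind?".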

In summary, you can easily identify and manage technical performance measures in either CORE or GENESYS. The first step (as in most systems engineering management situations) is to have a discussion with your customer to plan and confirm what is needed in the project. Collectively, settle on the names and definitions for the technical performance measures you must track. Then, edit the attributes for the CAPABILITY and REQUIREMENT classes to capture the names and definitions. Finally, capture the requisite technical information in the model and use the filter, hierarchy, and Excel connection features to document the measures.

Thanks for this explanation. A helpful read.

My CORE project schema does not have the “Performance Parameter Type” attribute on Requirement or Capability. It does have a class called Performance Characteristic. Should this class have “Performance Parameter Type” or an equivalent? Since it has the attribute “timeframe”, I am trying to understand whether this class can be useful for monitoring how well a system or a specific system element is satisfying, or is expected to satisfy, a technical requirement or goal.